Polars Rust API tutorial

For Polars 0.43.1 作者:张德龙 zdlldine@gmail.com

本项目为学习Polars Rust API时所做的笔记。整理成book,供大家学习。本文文档解释了绝大多数的API,你可以通过本文档的指引完成绝大部分事务。 本书采用Creative Commons署名-非商业性使用许可证(CC BY-NC 4.0)。你可以非商业性地共享和改编本书,需署名原作者。

This project is a note taken while learning the Polars Rust API. Organize it into a book for everyone to learn.This document explains the vast majority of the APIs, and you can accomplish most tasks by following the guidance provided in this document. This work is licensed under the Creative Commons Attribution-NonCommercial 4.0 International License (CC BY-NC 4.0). You are free to share and adapt this work non-commercially, as long as you provide appropriate credit to the original author.

Polars Rust API Tutorial

For Polars 0.43.1

Authors:Zhang Delong zdlldine@gmail.com 2024-10-7

This work is licensed under the Creative Commons Attribution-NonCommercial 4.0 International License (CC BY-NC 4.0). You are free to share and adapt this work non-commercially, as long as you provide appropriate credit to the original author.

This project is a note taken while learning the Polars Rust API. Organize it into a book for everyone to learn.

Why use Polars Rust API? To give your Rust program high performance data processing capabilities on its own. Without the need to embed a Python environment for your program.

Compile feature settings

#cargo.toml recommended dependencies, Polars supports more features that will result in slower compilation.

[dependencies]

polars = {version="0.43.0",features=["mode","find_many","polars-io","csv","polars-ops","lazy","docs-selection","streaming","regex","temporal","is_unique","is_between","dtype-date","dtype-datetime","dtype-time","dtype-duration","dtype-categorical","rows","is_in","pivot"]}

polars-io = "0.43.0"

polars-lazy = "0.43.0"

A complete list of features: https://docs.rs/crate/polars/latest/features

| Commonly used features | Meaning |

|---|---|

| lazy | Enable the lazy API |

| regex | Support for regular regex in col() expressions |

| sql | SQL queries are supported |

| streaming | Enable data flows, which allow you to process more data than you can in memory. |

| random | Generate a randomly sampled array. |

| timezones | Time zone support |

| strings | String extraction tool |

| object | ObjectChunked, an arbitrary data type, is supported, and it uses Any trait to handle different types. |

| json | Support serialization and deserialization of JSON |

| serde | Serialization and deserialization of the SERDE library are supported |

| serde-lazy | Serialization and deserialization of the SERDE library are supported |

| sort_multiple | Multi-column sorting is supported |

| rows | Create a DataFrame from a row and extract rows from a DataFrame. Activate pivot and transpose operations. |

| The meaning of this sentence: | |

| asof_join | Supports ASOF connection1 operation. |

| cross_join | Create two DataFrames of Cartesian product2. |

| is_in | Check whether the value is in the series |

| is_between | Determine whether it is between the upper and lower bounds. |

| zip_with | Zip the two series. |

| arg_where | Returns indexes that meet the criteria |

| unique_counts | Unique value counting is supported |

| rank | Calculate the rank |

| interpolate | Interpolates the missing values of Series |

In data processing, "joining" refers to merging two or more datasets together according to some shared key or column. Typically, this connection requires that the values of the keys must match exactly. However, ASOF connection is a special type of connection that does not require the exact match of the key values, but allows the connection to be made according to the nearest key. This is especially useful when working with time series data, as you may want to connect the data to the closest point in time, rather than an exact match. For example, if you have a piece of data that contains stock prices, and each row of data has a timestamp, you may want to connect this data with another piece of data that contains economic indicators, which also has a timestamp, but the timestamps may not exactly match.

In data processing, the Cartesian product refers to all possible combinations of two datasets. For example, if you have two DataFrames, one containing rows A and B, and the other containing rows 1 and 2, the Cartesian product of these two DataFrames will contain four rows: (A, 1), (A, 2), (B, 1), (B, 2).

Basic concepts

Polars is a data analytics library written in Rust. Polars relies on the following data structures: Series, and ChunkedArray<T>, DataFrame, and lazyframe.

A DataFrame is a series of two-dimensional data structures that can be thought of as tables of data made up of rows and columns (columns, called "fields" in data science). What you can do on a DataFrame is very similar to a query in SQL. YOU CAN GROUP, JOIN, PIVOT1, etc. Dataframes can be thought of as an abstraction of Vec<Series> , with each column corresponding to a Series. Series only holds Arc<dyn SeriesTrait>. The ChunkedArray<T> type implements SeriesTrait. Series is a column data representation of the obscure type of Polars. Some operations that are not related to data types are provided by Series and SeriesTrait, such as indexing and renaming operations. Operations related to data types must be downgraded to ChunkedArray<T>, the underlying data structure of the Series. ChunkedArray<T>, which is a chunked array, is similar to Vec<dyn ArrowArray> at the bottom layer, and chunking is conducive to parallel operation of data. This is the underlying data structure of Polars and implements a number of operations. Most operations are defined in chunked_array::ops or implemented on a ChunkedArray structure.

In data processing, "pivot" refers to pivot. Used to convert long-form data to wide-format data.

Indexing

Used to query an element or subset of a Series or Dataframe or lazyframe.

There are four main indexing kinds in Polars:

Types | API | Meaning |

|---|---|---|

| Get one Item of Series | .get method | Get a single element |

| Integer index | .take method | Given a number of integer index values, a subset of Series/Dataframe/lazyframe is returned for rows or columns |

| Name index | .select and .column method | Given the column name, a subset of Dataframe/lazyframe is returned. |

| Slice index | .slice method | Given a slice, a subset within the slice range is returned; |

| bool index | .filter method | Given a bool array as an index, it must be consistent with the number of container elements, and return a subset of elements corresponding to true. |

The API is also designed according to the above 4 types. See Indexing Series,Get one item of Series, Indexing dataframe, Indexing LazyFrame

表达式

Polars has a powerful concept called expressions (Expr types). Polars expressions can be used in a variety of contexts, essentially executing Fn(Series) -> Series. Expr takes the Series as input and the Series as the output. So Expr can be chained.

#![allow(unused)] fn main() { col("foo").sort().head(2) }

The above snippet represents selecting the column "foo", then sorting this column, and then taking the first two values of the sort output. The power of expressions is that each expression produces a new expression, and they can be chained or saved into a variable or passed as an argument. You can run expressions through the execution context of polars. Here we run two expressions in the select context:

#![allow(unused)] fn main() { df.lazy() .select([ col("foo").sort(Default::default()).head(None), col("bar").filter(col("foo").eq(lit(1))).sum(), ]) .collect()?; }

Each individual Polars expression can be run independently without the result of any other expression or without interacting with other expressions. As a result, Polars may assign expressions to different threads or processors to execute at the same time. Polars expressions are highly parallel. Understanding Polars expressions is a crucial step in learning Polars.

Context

Functions that accept Expressions are called contexts, and there are three types of functions:

| Meaning | Code |

|---|---|

| Select context | df.select([..]) |

| group/agg context | df.groupby(..).agg([..]) |

| Stack horizontally (hstack) or add columns | df.with_columns([..]) |

Data Type

Polars internally uses the Arrow data type. The Arrow data type is part of the Apache Arrow project that defines a cross-platform, language-agnostic data format. This data format enables efficient data exchange between different systems and languages without the need for data serialization and deserialization. Arrow datatypes include many common data types, such as integers, floating-point numbers, strings, dates, and times. Complex data structures String, Categorical, and Object are also supported.

Group | Types | Comment |

|---|---|---|

| Number type | Int8 | 8-bit signed integer. |

| Number type | Int16 | 16-bit signed integer. |

| Number type | Int32 | 32-bit signed integer. |

| Number type | Int64 | 64-bit signed integer. |

| Number type | UInt8 | 8-bit unsigned integer. |

| Number type | UInt16 | 16-bit unsigned integer. |

| Number type | UInt32 | 32-bit unsigned integer. |

| Number type | UInt64 | 64-bit unsigned integer. |

| Number type | Float32 | 32-bit floating point. |

| Number type | Float64 | 64-bit floating point. |

| Nested types | Struct | A struct type similar to Vec<Series> can be used to encapsulate multiple columns of data in a single column. The columns do not have to be of the same type. |

| Embedding type | List | The list type is the Arrow LargeList type at the bottom. |

| Time type | Date | Date type, the underlying i32 type stores the number of days since 00:00:00 UTC1 on January 1, 1970. The date range is approximately from 5877641 BC to 5877641 AD. |

| Time type | Datetime(TimeUnit, Option<PlSmallStr>) | Datetime type. The first parameter is the unit, and the second parameter is the time zone. Usually defined as Datetime(TimeUnit::Milliseconds,None); The underlying layer is stored in i64 type milliseconds from 00:00:00 UTC on January 1, 19701. The time range is approximately from about 292,469,238 B.C. to 292,473,178 A.D. In practice, it is perfectly sufficient to use i64 types to store timestamps in milliseconds. |

| Time type | Duration(TimeUnit) | Poor storage time. Duration(TimeUnit::Milliseconds) is the return type of the Date/Datetime subtraction operation. |

| Time type | Time | The type of time, which stores nanoseconds starting at 0:00 of the day. |

| other | Boolean | Boolean, internally stored in bits. |

| other | String | String type, the underlying layer is LargeUtf8 |

| other | Binary | Arbitrary binary data. |

| other | Object | A limited supported data type that can be any value. |

| other | Categorical | Categorical variables. Similar to R factor. Crate feature dtype-categorical only |

For a detailed Arrow type description, see:https://arrow.apache.org/docs/format/Columnar.html

This day (January 1, 1970 00:00:00 UTC) is known as the UNIX epoch

Float32 and Float64 comply with the IEEE 754 standard, with the following caveats:

Polars requirements operation cannot rely on the positivity or negativity of 0 or NaN, nor does it guarantee a payload with NaN values. This is not limited to arithmetic operations. All zeros are normalized to +0 and all NaN to positive NaN without payload before the sorting and grouping operation for a valid equation check: NaN and NaN comparisons are considered equal. NaN is larger than all non-NaN.

In the IEEE 754 floating-point number standard, 0 and NaN (non-numeric) are signed, which means that there are +0 and -0, positive NaN and negative NaN. Positive and negative zeros are numerically equal, but in some calculations, such as division or the limits of math functions, positive and negative zeros may behave differently. In the IEEE floating-point standard, NaN (non-numeric) is a binary representation with a "payload" section. He refers to the part other than the sign and exponential bits. This section can store additional information. For example, if the result of a mathematical operation is undefined, this information can be stored in the NaN payload. However, most often, this payload is not used, so in many operations, the handling of the payload of NaN is not clearly defined.

Data type conversion

Series underlying type conversion

Series itself is dynamically typed. He points to the underlying ChunkedArray<T> by a reference. Where T is the underlying data type.

#![allow(unused)] fn main() { Series::cast(&self, dtype: &DataType) -> Result<Series, PolarsError> }

Type conversion by Expressions

Numeric types are converted to each other

Converting numeric types of different capacities to each other can experience overflow issues. By default, an error is thrown.

#![allow(unused)] fn main() { col("integers").cast(DataType::Boolean).alias("i2bool")//Numeric to bool, 0 is flase, non-zero is true. col("floats").cast(DataType::Boolean).alias("f2bool")//Numeric to bool, 0 is flase, non-zero is true. bool and numeric are allowed to convert to each other. However, transformation from string to bool is not allowed. }

Strings are converted to Numbers

#![allow(unused)] fn main() { col("floats_as_string").cast(DataType::Float64) //Converts a string to a numeric value through a transformation operation, throwing a runtime error if a non-number occurs. }

Numbers are converted to strings

#![allow(unused)] fn main() { col("integers").cast(DataType::String) col("float").cast(DataType::String) }

The string is parsed to a datetime

#![allow(unused)] fn main() { let mut opt = StrptimeOptions::default(); opt.format=Some("%Y-%m-%d %H:%M:%S".to_owned()); //Default value is None, polars can recognize standard date and time such as: //"2024-09-20 00:44:00+08:00" by default, time zone can be omitted. If your data // is in standard datetime format, then you don't need to set this parameter. col("datetimestr").str().to_datetime(None,None,opt,lit("raise")) }

The to_datatime parameters are Units, Time Zone, Resolution Parameters, and Ambiguity. Many parts of the world use daylight saving time, which turns on and moves the local time forward by 1 hour and back at the end of daylight saving time. Dialing 1 hour into the future will cause a time period to not exist, and dialing back will cause a time period to appear twice. When a certain time value appears in a time period that should not exist, that is, when the ambiguity processing parameter comes into play, it is set to lit("raise"), and an error is reported when the ambiguous time occurs. An important lesson is that the storage of dates and times must contain time zones, such as "2024-09-20 0:44:00+08:00", and the time zone information can be used to represent the time correctly. In cases where the time zone is omitted, the time zone defaults to UTC. This correctness is not only in the local region, but also in the world, where the data can be correctly calculated and compared.

Datetime to string

This is datetime formating.

#![allow(unused)] fn main() { col("datetime").dt().to_string("Data: %Y-%m-%d, Time: %H:%M:%S") }

Categorical variables

Categorical variables are used to optimize string handling. When data is stored, there are many cases where strings are used to represent categories, such as city, gender, ethnicity, species, and so on. However, after loading into memory, a large number of duplicate strings take up unnecessary resources, and string comparison operations are also very time-consuming. Categorical variables are used to solve the above problems:

| String column | Categorical columns | - |

|---|---|---|

| Series | Type ID | Type comparison |

| polar bear | 0 | polar bear |

| panda | 1 | panda |

| brown bear | 2 | brown bear |

| panda | 1 | |

| brown bear | 2 | |

| brown bear | 2 | |

| polar bear | 0 | |

| … | … |

After the conversion of the string column -> Categorical, we only need to store the type ID and the type comparison table. This saves a lot of memory and also speeds up == operations. The benefit of this encoding is that the string value is stored only once. In addition, when we perform operations such as sorting, counting, we can compare IDs, which is much faster than processing string data. Polars supports processing categorical data with two different data types: Enum and Categorical. When an Enum is used, the number of class of Enum (Note: Not items of Series) is fixed, an element that does not belong to the Enum is considered to be a data error. The number of categories is not fixed, then with Categorical, new types will be silently added to Categorical. If your needs change in the process, you can always switch from one to the other.

There are two types of sorting of categorical variables, one is based on the numerical order of type IDs, and the other is based on string order. The difference is specified when building the type:

#![allow(unused)] fn main() { DataType::Categorical(None, CategoricalOrdering::Physical) //ID order DataType::Categorical(None, CategoricalOrdering::Lexical) //Lexical order }

Exp:

#![allow(unused)] fn main() { //Creating a Series of type Categorical let s= Series::new("字段名", vec!["option1","option2","option3","option4"]) .cast(DataType::Categorical(None, CategoricalOrdering::Physical)); }

Null, None, and NaN

In Polars, missing data is represented by Null because Polars adheres to the data specifications of the Apache Arrow project. In Rust, Option::None is used to represent missing data. So that a None value in Rust will present as null in Polars.

#![allow(unused)] fn main() { let df = df! ( "value" => &[Some(1), None], )?; println!("{}", &df); }

The output:

shape: (2, 1)

┌───────┐

│ value │

│ --- │

│ i64 │

╞═══════╡

│ 1 │

│ null │

└───────┘

For floating-point numbers, there is also NaN (Not a Number), which is a special number usually resulting from erroneous mathematical operations. NaN is a special value of the float type and is not used to represent missing data. This means that null_count() only counts null values and does not include NaN values. The functions fill_nan and fill_null are used to fill NaN and null values, respectively.

| Meaning | Expression | Return Value |

|---|---|---|

| Zero divided by zero | 0/0 | NaN |

| Square root of a negative number | (-1f32).sqrt() | NaN |

| Certain operations involving infinity let inf=std::f32::INFINITY | inf*0 inf/inf | NaN |

| All mathematical operations involving NaN | NaN+1 NaN*1 | NaN |

缺失数据的处理

| 处理方案 | 示例代码 |

|---|---|

| 填充常数 | col("col2").fill_null(lit(2)) |

| 填充表达式值 | col("col2").fill_null(median("col2")) |

| 填充前一个值 | col("col2").forward_fill(None) |

| 插值 | col("col2").interpolate(InterpolationMethod::Linear) |

This chapter systematically introduces the data analysis process and related concepts, particularly the operations of IO, DataFrame, and LazyFrame.

Sample Data

Some data that might be used in this book is compiled here.

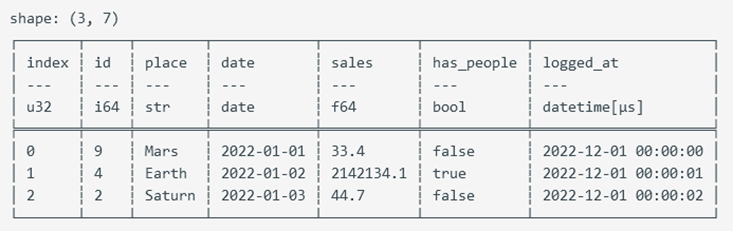

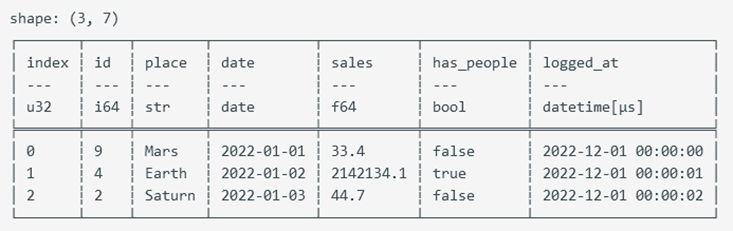

#![allow(unused)] fn main() { let mut employee_df: DataFrame = df!( "Name" => ["Lao Li", "Lao Li", "Lao Li", "Lao Li", "Lao Zhang", "Lao Zhang", "Lao Zhang", "Lao Zhang", "Lao Wang", "Lao Wang", "Lao Wang", "Lao Wang"], "Employee ID" => ["Employee01", "Employee01", "Employee01", "Employee01", "Employee02", "Employee02", "Employee02", "Employee02", "Employee03", "Employee03", "Employee03", "Employee03"], "Date" => ["August", "September", "October", "November", "August", "September", "October", "November", "August", "September", "October", "November"], "Performance" => [83, 24, 86, 74, 89, 59, 48, 79, 51, 71, 44, 90] )?; use polars::prelude::*; use chrono::prelude::*; use polars::prelude::prelude::date_range; let mut types_df = df!( "id" => &[9, 4, 2], "place" => &["Mars", "Earth", "Saturn"], "date" => date_range("date".into(), NaiveDate::from_ymd_opt(2022, 1, 1).unwrap().and_hms_opt(0, 0, 0).unwrap(), NaiveDate::from_ymd_opt(2022, 1, 3).unwrap().and_hms_opt(0, 0, 0).unwrap(), Duration::parse("1d"), ClosedWindow::Both, TimeUnit::Milliseconds, None)?, "sales" => &[33.4, 2142134.1, 44.7], "has_people" => &[false, true, false], "logged_at" => date_range("logged_at".into(), NaiveDate::from_ymd_opt(2022, 1, 1).unwrap().and_hms_opt(0, 0, 0).unwrap(), NaiveDate::from_ymd_opt(2022, 1, 1).unwrap().and_hms_opt(0, 0, 2).unwrap(), Duration::parse("1s"), ClosedWindow::Both, TimeUnit::Milliseconds, None)?, )? .with_row_index("index".into(), None)?; let salary_df = df![ "Category" => ["Development", "Development", "Development", "Development", "Development", "Intern", "Intern", "Sales", "Sales", "Sales"], "Employee ID" => [11, 7, 9, 8, 10, 5, 2, 3, 1, 4], "Salary" => [5200, 4200, 4500, 6000, 5200, 3500, 3900, 4800, 5000, 4800], ]?; }

IO

This chapter introduces how to read and write data from and to common formats such as CSV, Parquet, and JSON.

Creating a DataFrame from CSV

#![allow(unused)] fn main() { // Requires the polars-io feature to create a DataFrame from a CSV file use polars::prelude::*; // Loads into memory immediately. If the CSV content is large, consider using the lazy API let path = "E:\\myfile\\src\\pol\\input收治病人数据.csv"; let df = CsvReadOptions::default() .try_into_reader_with_file_path(Some(path.into())) .unwrap() .finish() .unwrap(); }

Creating a LazyFrame from CSV

This is used for reading CSV files in a lazy manner. The regular method of reading files loads the data into memory immediately, which can consume unnecessary resources if the CSV file is very large. The Lazy API defers the actual reading until the collect() method of the lazy DataFrame is called. Before calling the collect() function, you can set processing methods and computation expressions.

#![allow(unused)] fn main() { // Requires the polars-io feature to create a lazyframe from a CSV file use polars::prelude::*; let lazyreader = LazyCsvReader::new("./test.csv"); let lazyreader = LazyCsvReader::new_paths(&["./test0.csv", "./test1.csv"]); // Reading multiple files. let lf = lazyreader.finish()?; }

LazyCsvReader API

| API | Description |

|---|---|

| with_glob(toggle: bool) | Enables glob pattern matching for the path. |

| with_skip_rows_after_header(self, offset: usize) | Skips a number of rows after the header. |

| with_row_index(self, row_index: Option<RowIndex>) | Adds a row index after reading, starting from 0. RowIndex{name:"RowIndex", offset:0} |

| with_n_rows(num_rows: Option<usize>) | Reads only n rows; cannot guarantee exact n in multithreaded conditions. |

| with_skip_rows(n: usize) | Skips n rows; the header starts from row index n. |

| with_has_header(has_header: bool) | Indicates whether there is a header row. |

| with_separator(separator: u8) | Default field separator. |

| with_comment_prefix(comment_prefix: Option<&str>) | Comment marker; lines starting with comment_prefix are ignored as comments. |

| with_quote_char(quote_char: Option<u8>) | String quote marker, e.g., b'"'. |

| with_eol_char(eol_char: u8) | End-of-line character, e.g., b'\n'. |

| with_null_values(null_values: Option<NullValues>) | Sets strings recognized as null values. |

| with_encoding(CsvEncoding::Utf8) | Sets the character encoding. |

| finish() | Obtains the final lazyframe. |

Schema

In Polars, a schema refers to the structure of a data table, including detailed information about field names and field types. Typically, when loading a CSV, Polars can automatically infer data types. However, there are occasions when this may not meet your needs, and you need to specify types manually, such as when parsing dates and times. In the .with_dtype_overwrite call, you don't need to specify information for all fields; unspecified fields will still be automatically inferred. For available data types, see Data Types.

#![allow(unused)] fn main() { // This code demonstrates how to specify the types for certain fields in a CSV. use polars::prelude::*; use std::fs::File; let mut schema = Schema::default(); schema.insert("col1".into(), DataType::String); schema.insert("col2".into(), DataType::Datetime(TimeUnit::Milliseconds, None)); // Polars can automatically recognize standard time formats like "2024-09-20 00:44:00+08:00", where +08:00 indicates the timezone. If the timezone part is omitted, it defaults to UTC. schema.insert("col3".into(), DataType::Categorical(None, CategoricalOrdering::Physical)); let lazyreader = LazyCsvReader::new("E:\\data.csv") .with_has_header(true) .with_dtype_overwrite(Some(schema.into())); // Set field types based on column names. .finish()?; }

Writing to a CSV File

DataFrame to File

#![allow(unused)] fn main() { // Write to a CSV file let mut file = File::create("docs/data/output.csv").expect("could not create file"); CsvWriter::new(&mut file).finish(&mut employee_df)?; }

LazyFrame to File

#![allow(unused)] fn main() { let mut opt = CsvWriterOptions::default(); opt.maintain_order = true; // Data is processed in parallel; if this option is not enabled, the order of rows in the file cannot be guaranteed. opt.include_bom = true; // Add BOM. If BOM is not added, Excel may display garbled text; some programs do not support UTF-8 BOM, which can also lead to garbled text. df1.lazy().sink_csv("d:/out.csv", opt)?; }

BOM stands for Byte Order Mark. It is intended for UTF-16 and UTF-32 to indicate byte order. UTF-16 and UTF-32 process data in units of 2 and 4 bytes, respectively, which requires consideration of endianness (big-endian or little-endian). UTF-8 processes data in units of 1 byte and is not affected by CPU endianness; therefore, UTF-8 does not need a BOM to indicate byte order. A UTF-8 without a BOM is the standard format. However, Microsoft uses BOM to indicate encoding, so a UTF-8 CSV format without a BOM may display garbled text in Excel, as Excel defaults to a different encoding. UTF-8 with a BOM can also have compatibility issues, as some programs do not recognize BOM, leading to UTF-8 garbled text.

Building a DataFrame from Parquet Format

Apache Parquet is an open-source columnar storage data file format designed for efficient data storage and retrieval. It offers efficient data compression and encoding schemes, capable of handling large and complex datasets. Additionally, it supports multiple programming languages. Compared to the simple CSV format, Parquet has significant advantages when storing and processing large datasets:

- Storage Efficiency: Parquet files are much smaller than CSV files and support various data compression methods.

- Query Performance: The import and query speed of Parquet is much higher than that of CSV, especially when dealing with large datasets.

- Self-Describing: Parquet files contain metadata about the data structure, including detailed information about fields and field types.

- Compatibility and Performance: Parquet is compatible with many data processing frameworks, such as Hadoop and Spark.

#![allow(unused)] fn main() { // This code demonstrates how to load a Parquet format file use std::fs::File; use polars::prelude::*; let mut input_file = File::open("d:/output.parquet")?; let df = ParquetReader::new(&mut input_file).finish()?; println!("{}", df); }

Building a LazyFrame from Parquet Format

#![allow(unused)] fn main() { let mut opt = ScanArgsParquet::default(); opt.n_rows = None; // Default is None, meaning all rows are read. Some(100) would read only 100 rows. opt.row_index = Some(RowIndex { name: "RowIndex".into(), offset: 0 }); // Adds a row index as the first column, with the column name "RowIndex". Default is None, meaning no row index is added. let lf = LazyFrame::scan_parquet("d:/output.parquet", opt)?; println!("{}", lf.collect()?); }

Writing to Parquet Format

DataFrame to File

#![allow(unused)] fn main() { use std::fs::File; use polars::prelude::*; let mut file = File::create("d:/output.parquet").expect("could not create file"); ParquetWriter::new(&mut file) .with_compression(ParquetCompression::Zstd(None)) .finish(&mut employee_df)?; }

LazyFrame to File

#![allow(unused)] fn main() { let mut opt = ParquetWriteOptions { compression: ParquetCompression::Zstd(None), // Enable compression maintain_order: true, // Maintain row order; by default, multithreaded operations cannot guarantee row order ..Default::default() }; employee_df.lazy().sink_parquet("D:/output.parquet", opt)?; }

Creating a DataFrame from JSON

JSON supports two formats:

| Type | Description |

|---|---|

| JsonFormat::Json | Indicates that the entire file contains an array, where the array's content consists of individual JSON objects. |

| JsonFormat::JsonLines | Each line contains a single JSON object. |

Json

Read Json file.

#![allow(unused)] fn main() { use polars::prelude::*; // One JSON array stored in the entire file let json_array = r#" [ { "json_a": 1, "b": 2, "c": 3}, { "json_a": 21, "b": 22, "c": 23}, { "json_a": 31, "b": 32, "c": 33} ]"#; let buf = Cursor::new(json_array); // Any type implementing the Read trait can be used as input let df = JsonReader::new(buf) .with_json_format(JsonFormat::Json) .finish()?; }

JsonLines

Read JsonLines file.

#![allow(unused)] fn main() { // One JSON object per line let json_lines = r#" {"jsonlines_a": 1,"b": 2,"c": 3} {"jsonlines_a": 21,"b": 22,"c": 23} {"jsonlines_a": 31,"b": 32,"c": 33}"#; let buf1 = Cursor::new(json_lines); // Any type implementing the Read trait can be used as input let df1 = JsonReader::new(buf1) .with_json_format(JsonFormat::JsonLines) .finish()?; println!("{}\n{}", df, df1); }

Creating a LazyFrame from JSON

This is used for reading JSON line files in a lazy manner. The regular method of reading files loads the data into memory immediately, which can consume unnecessary resources if the JSON file is very large. The Lazy API defers the actual reading until the collect() method of the LazyFrame is called. Before calling the collect() function, you can set processing methods and computation expressions.

#![allow(unused)] fn main() { // Requires the polars-io feature to create a lazyframe from a JSON file use polars::prelude::*; let lazyreader = LazyJsonLineReader::new("./test.csv"); let lazyreader = LazyJsonLineReader::new_paths(&["./test0.csv", "./test1.csv"]); // Reading multiple files. let lf = lazyreader.finish()?; }

LazyJsonLineReader API

| API | Description |

|---|---|

| with_row_index(self, row_index: Option<RowIndex>) | Adds a row index after reading, starting from 0. RowIndex{name:"RowIndex", offset:0} |

| with_n_rows(num_rows: Option<usize>) | Reads only n rows; cannot guarantee exact n in multithreaded conditions. |

| with_schema_overwrite(self, schema_overwrite) | Sets the type for certain fields. |

| finish() | Obtains the final lazyframe. |

Writing to JSON

DataFrame to File

JSON files can be in two formats: JsonFormat::Json and JsonFormat::JsonLines. For more details, see Creating a DataFrame from JSON.md.

#![allow(unused)] fn main() { // Write to a JSON file let mut file = File::create("docs/data/output.json").expect("could not create file"); JsonWriter::new(&mut file) .with_json_format(JsonFormat::Json) // JsonFormat::Json or JsonFormat::JsonLines .finish(&mut employee_df)?; }

LazyFrame to File

#![allow(unused)] fn main() { let mut opt = JsonWriterOptions::default(); opt.maintain_order = true; // Data is processed in parallel; if this option is not enabled, the order of rows in the file cannot be guaranteed. employee_df.lazy().sink_json("d:/out.json", opt)?; // The default format is JsonLines, and it cannot currently be set to Json format. }

Series

A Series is a data structure used to store fields, containing all elements of a field with the requirement that all elements are of the same type. Essentially, a Series is a ChunkedArray, which is an array stored in chunks. In Polars, data within a Series is stored in the form of chunks, where each chunk is an independent array. This design enhances data processing efficiency, especially during large-scale data operations, as chunked data can be processed in parallel.

Constructing a Series

A Series is a data structure used to store fields, containing all elements of a field, with the requirement that all elements are of the same type. Essentially, a Series is a ChunkedArray, which is an array stored in chunks. In Polars, data within a Series is stored in the form of chunks, where each chunk is an independent array. This design enhances data processing efficiency, especially during large-scale data operations, as chunked data can be processed in parallel.

Here's how you can construct a Series:

#![allow(unused)] fn main() { use polars::prelude::*; let s = Series::new("field_name", vec![0i32, 2, 1, 3, 8]); }

In this example, a Series named "field_name" is created, containing integer elements.

Indexing a Series

Slice Indexing with series.slice(idx, len)

The returned Series is a view of self and does not copy the data. If _offset is negative, it counts from the end of the array.

Function declaration:

fn slice(&self, _offset: i64, _length: usize) -> Series

Example:

#![allow(unused)] fn main() { let s = Series::new("field_name", [0i32, 1, 8]); let s2 = s.slice(2, 4); }

Indexing by Position

series.take_slice(&[u32])

Use take_slice when the index array is stored in a slice.

#![allow(unused)] fn main() { let s = Series::new("foo".into(), [1i32, 2, 4, 5, 7, 3]); let s2 = s.take_slice(&[1, 3, 5]); // s2 == Series[2, 5, 3] }

series.take(&IdxCa)

Use the take method when indices are stored in the IdxCa type. IdxCa is an alias for ChunkedArray<UInt32Type>.

#![allow(unused)] fn main() { let s = Series::new("foo".into(), [1i32, 2, 4, 5, 7, 3]); let idx0: IdxCa = IdxCa::from_vec("index".into(), vec![1, 3, 4]); let res0 = s.take(&idx0).unwrap(); println!("{}", res0); }

Logical Indexing

series.filter(bool_idxs)

bool_idx is a logical index, essentially an array of boolean values. Elements corresponding to true in bool_idx are copied from the series and returned. The implicit requirement is that len(bool_idx) == len(series). bool_idx is of type BooleanChunked, an alias for ChunkedArray. The series defines boolean operation functions, supporting comparisons between a Series and a single value, as well as between two Series.

#![allow(unused)] fn main() { let s1 = Series::new("field_name1", vec![0i32, 1, 2, 5, 8]); let s2 = Series::new("field_name2", vec![2i32, -1, 3, -5, 8]); let idx = s1.gt(&s2).unwrap() & s1.lt_eq(5).unwrap(); let s2 = s1.filter(&idx).unwrap(); }

Logical Operations

In series.filter(bool_idxs), bool_idxs is of type BooleanChunked, which can be returned by logical operations.

| Series Comparison Methods | Meaning |

|---|---|

| .gt(&Series) | > |

| .gt_eq(&Series) | >= |

| .equal() | == |

| .equal_missing() | == |

| .not_equal() | != |

| .not_equal_missing() | != |

| .lt() | < |

| .lt_eq() | <= |

| .is_nan() | Is NaN |

| .is_not_nan() | Is not NaN |

| .is_empty() | Is empty, series.len() == 0 |

| .is_finite() | Is finite |

| .is_infinite() | Is infinite |

| .none_to_nan(&self) | Converts missing values to NaN |

| .is_null() | Is null, indicating missing values |

| .is_not_null() | Is not null |

The *_missing series of functions are used to compare two Series or ChunkedArray for equality, taking into account possible missing values (i.e., None or NaN). In many cases, directly comparing two Series or ChunkedArray containing missing values may yield inaccurate results because None or NaN is not equal to any value, including themselves. The equal_missing function provides a way to handle this situation by treating elements with missing values at the same position as equal.

Reading a Single Element

To index into a Series, you must first cast it to a ChunkedArray<T> of the specified type:

#![allow(unused)] fn main() { let s = Series::new("field_name", [0i32, 1, 8]); let item = s.i32().unwrap().get(2).unwrap(); }

If you need to process elements in a Series within a loop, the best practice is to use functions like series.i32() to extract a reference to the underlying ChunkedArray<T>. This does not copy the data. You can then perform operations on this ChunkedArray.

Available casting functions include: i8() i16() i32() i64() f32() f64() u8() u16() u32() u64() bool() str() binary() decimal() list(). These functions extract a reference to the underlying ChunkedArray<T> of the Series. You must ensure that the casting function you call matches the underlying type T, otherwise a runtime error will occur. To change the underlying type T, use the Series::cast(&DataType::Int64) function, which converts the type of the underlying ChunkedArray<T> and returns a new Series.

Iterating Over Values

A Series is a dynamically typed variable. If the type is unknown during programming, you must use the Series:iter() iterator, which returns values wrapped in AnyValue. This wrapping and unwrapping can cause performance loss. Try to avoid APIs that return AnyValue.

Iterating Over AnyValue

#![allow(unused)] fn main() { let s = Series::new("foo".into(), [1i32, 2, 3]); let s_squared: Series = s.iter() .map(|opt_v| { match opt_v { AnyValue::Int32(x) => Some(x * x), // You can add handling logic for different types. _ => None, } }).collect(); }

Iterating Over Type Known

If the underlying type is known, you can use downcasting functions like i8() i16() i32() i64() f32() f64() u8() u16() u32() u64() bool() str() binary() decimal() list() to extract a reference to the underlying ChunkedArray<T> of the Series. The type casting operation only converts the reference to the underlying ChunkedArray<T> and does not copy the data. Additionally, you need to ensure that the casting function you call matches the underlying type T, otherwise a runtime error will occur. s.i32()?.iter() generates the corresponding iterator.

#![allow(unused)] fn main() { let s = Series::new("foo", [1i32, 2, 3]); let s_squared: Series = s.i32()?.iter() .map(|opt_v| { match opt_v { Some(v) => Some(v * v), None => None, // null value } }).collect(); }

Arithmetic Operations

Polars defines arithmetic operations such as addition, subtraction, multiplication, and division. Here's how you can use them:

#![allow(unused)] fn main() { let s = Series::new("a", [1, 2, 3]); let out_add = &s + &s; let out_sub = &s - &s; let out_div = &s / &s; let out_mul = &s * &s; // Supports operations between Series and Series, as well as Series and scalar values let s: Series = (1..3).collect(); let out_add_one = &s + 1; let out_multiply = &s * 10; let out_divide = 1.div(&s); // Division let out_add = 1.add(&s); // Addition let out_subtract = 1.sub(&s); // Subtraction let out_multiply = 1.mul(&s); // Multiplication }

In this example, you can see how to perform arithmetic operations both between two Series and between a Series and a scalar value.

Common Series API

| Method | Description |

|---|---|

series.len() | Returns the length of the Series. |

series.name() | Returns the name of the Series. |

series.rename(&str) | Renames the Series. |

series.rechunk() | A Series is essentially an array divided into chunks, which aids in parallel computation. However, after multiple indexing operations, the chunks can become fragmented, affecting computational efficiency. rechunk merges adjacent chunks to improve efficiency. |

series.cast(&DataType::Int64) | Converts the internal data type of the Series and returns a new Series. |

series.chunks() | Returns the chunks of the Series. |

series.chunk_lengths | Returns an iterator over the lengths of the chunks. |

series.get(u32) | Returns the value at the specified index as an AnyValue. |

Dataframe

Polars is a high-performance data processing library designed for fast analysis of large-scale data. Its DataFrame is a two-dimensional tabular data structure similar to Pandas, but optimized for performance and memory management.

Features of Polars DataFrame:

- Columnar Storage: Polars uses columnar storage, which allows for more efficient data reading and processing, especially suitable for large datasets.

- Parallel Processing: Polars utilizes multi-core CPUs for parallel computation, significantly enhancing data processing speed.

- Strong Type System: Polars checks data types at compile time, reducing runtime errors.

- Rich Functionality: Offers a variety of data processing functions, including filtering, aggregation, joining, and transformation.

Polars DataFrame provides data scientists and analysts with an efficient and flexible data processing tool, particularly well-suited for handling large-scale datasets.

Constructing a DataFrame

Empty DataFrame

#![allow(unused)] fn main() { use polars::prelude::*; let df = DataFrame::default(); }

Creating a DataFrame from a Macro

#![allow(unused)] fn main() { use polars::prelude::*; let mut arr = [0f64; 5]; let v = vec![1, 2, 3, 4, 5]; // df macro support let df = df! ( "nrs" => &[Some(1), Some(2), Some(3), None, Some(5)], // Direct literals, use None to represent null. "names" => &["A", "A", "B", "C", "B"], // Direct literals "col3" => &arr, // Rust array "groups" => &v, // Generated from Vec )?; println!("{}", &df); }

Creating a DataFrame from Vec<Series>

#![allow(unused)] fn main() { use polars::prelude::*; let s1 = Series::new("Fruit".into(), ["Apple", "Apple", "Pear"]); let s2 = Series::new("Color".into(), ["Red", "Yellow", "Green"]); // s1 and s2 must have the same length. let df: PolarsResult<DataFrame> = DataFrame::new(vec![s1, s2]); }

Indexing a DataFrame

Column Indexing

#![allow(unused)] fn main() { let res: &Series = employee_df.column("Employee")?; // Returns a reference to a Series based on a single column name let res: &Series = employee_df.select_at_idx(1)?; // Returns a reference to a Series based on an index let res: DataFrame = employee_df.select(["Category", "Salary"])?; // Copies the specified fields and returns a new DataFrame let sv: Vec<&Series> = employee_df.columns(["Category", "Salary"])?; // Returns references to Series based on multiple column names let res: &[Series] = employee_df.get_columns()?; // Returns a slice of all columns, pointing to the internal data structure of the DataFrame without copying data let res: Vec<Series> = employee_df.take_columns(); // Takes ownership of all columns }

Row Indexing

Boolean Indexing

#![allow(unused)] fn main() { let se = employee_df.column("Performance")?; let mask = se.gt_eq(80)?; // Checks if se is greater than or equal to 80. Returns a Boolean array. let res: DataFrame = employee_df.filter(&mask)?; }

Slice Indexing

#![allow(unused)] fn main() { let res: DataFrame = employee_df.slice(5, 3); }

Index Values

#![allow(unused)] fn main() { let idx = IdxCa::new("idx".into(), [0, 1, 9]); let res: DataFrame = employee_df.take(&idx)?; // Returns rows with indices 0, 1, and 9 }

Grouping and Simple Aggregation

LazyFrame is designed to be more user-friendly: it is recommended to convert a DataFrame to a LazyFrame using dataframe.lazy() before performing aggregation.

| Term | Meaning |

|---|---|

| Simple Aggregation | Input several data points and return a single value based on a certain algorithm. For example, min, max, mean, head, tail, etc., are all aggregation functions. |

| Grouping | As the name suggests, it involves grouping data based on specified fields, and subsequent aggregation operations will call the aggregation function once for each group. |

Practical Example 1

Observe the sample data employee_df, which contains the performance of 3 employees over the past 4 months.

#![allow(unused)] fn main() { let mut employee_df: DataFrame = df!( "Name" => ["Lao Li", "Lao Li", "Lao Li", "Lao Li", "Lao Zhang", "Lao Zhang", "Lao Zhang", "Lao Zhang", "Lao Wang", "Lao Wang", "Lao Wang", "Lao Wang"], "Employee ID" => ["Employee01", "Employee01", "Employee01", "Employee01", "Employee02", "Employee02", "Employee02", "Employee02", "Employee03", "Employee03", "Employee03", "Employee03"], "Date" => ["August", "September", "October", "November", "August", "September", "October", "November", "August", "September", "October", "November"], "Performance" => [83, 24, 86, 74, 89, 59, 48, 79, 51, 71, 44, 90] )?; }

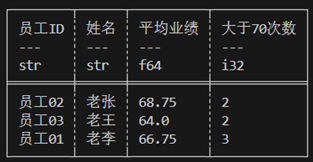

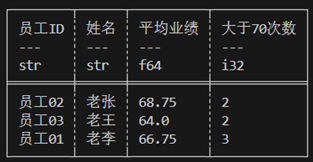

The current requirement is to calculate the average performance for each person across all months and count the number of times each person's performance exceeded 70. The data needs to be grouped by Employee ID and then aggregated.

#![allow(unused)] fn main() { let res = employee_df.lazy().group_by(["Employee ID", "Name"]) // group_by may disrupt row order; group_by_stable can preserve the original row order. .agg([ col("Performance").mean().alias("Average Performance"), col("Performance").gt(70).cast(DataType::Int32).sum().alias("Count Greater Than 70") ]).collect()?; println!("{}", res); }

Polars provides aggregation functions through expressions to accomplish simple aggregation.

shape: (3, 4)

┌─────────────┬───────────┬─────────────────────┬───────────────────────┐

│ Employee ID ┆ Name ┆ Average Performance ┆ Count Greater Than 70 │

│ --- ┆ --- ┆ --- ┆ --- │

│ str ┆ str ┆ f64 ┆ i32 │

╞═════════════╪═══════════╪═════════════════════╪═══════════════════════╡

│ Employee01 ┆ Lao Li ┆ 66.75 ┆ 3 │

│ Employee02 ┆ Lao Zhang ┆ 68.75 ┆ 2 │

│ Employee03 ┆ Lao Wang ┆ 64.0 ┆ 2 │

└─────────────┴───────────┴─────────────────────┴───────────────────────┘

Practical Example 2

Calculate the top two performers and their corresponding performances for each month.

#![allow(unused)] fn main() { let step1 = employee_df.lazy().group_by(["Date"]) // group_by may disrupt row order; group_by_stable can preserve the original row order. .agg([ col("Employee ID"), col("Performance"), col("Performance").rank(RankOptions::default(), None).alias("rank"), ]); let step2 =step1.clone().explode([col("Employee ID"), col("Performance"), col("rank")]); let step3 = step2.clone() .filter(col("rank").gt_eq(2)); println!("step1:\n{:?}\nstep2:{:?}\nstep3:\n{:?}",step1.collect(),step2.collect(),step3.collect()); }

Step 1

#![allow(unused)] fn main() { .group_by(["Date"]).agg([ col("Employee ID"), col("Performance"), col("Performance").rank(RankOptions::default(), None).alias("rank"), ]) }

The agg aggregation operation called in Practical Example 2 wraps multiple results within a group into a list.

shape: (4, 4)

┌───────────┬─────────────────────────────────┬──────────────┬───────────┐

│ Date ┆ Employee ID ┆ Performance ┆ rank │

│ --- ┆ --- ┆ --- ┆ --- │

│ str ┆ list[str] ┆ list[i32] ┆ list[u32] │

╞═══════════╪═════════════════════════════════╪══════════════╪═══════════╡

│ August ┆ ["Employee01", "Employee02", "… ┆ [83, 89, 51] ┆ [2, 3, 1] │

│ October ┆ ["Employee01", "Employee02", "… ┆ [86, 48, 44] ┆ [3, 2, 1] │

│ November ┆ ["Employee01", "Employee02", "… ┆ [74, 79, 90] ┆ [1, 2, 3] │

│ September ┆ ["Employee01", "Employee02", "… ┆ [24, 59, 71] ┆ [1, 2, 3] │

└───────────┴─────────────────────────────────┴──────────────┴───────────┘

Step 2

.explode([col("Employee ID"), col("Performance"), col("rank")])

This call unpacks the values wrapped in a list.

shape: (12, 4)

┌───────────┬─────────────┬─────────────┬──────┐

│ Date ┆ Employee ID ┆ Performance ┆ rank │

│ --- ┆ --- ┆ --- ┆ --- │

│ str ┆ str ┆ i32 ┆ u32 │

╞═══════════╪═════════════╪═════════════╪══════╡

│ September ┆ Employee01 ┆ 24 ┆ 1 │

│ September ┆ Employee02 ┆ 59 ┆ 2 │

│ September ┆ Employee03 ┆ 71 ┆ 3 │

│ November ┆ Employee01 ┆ 74 ┆ 1 │

│ November ┆ Employee02 ┆ 79 ┆ 2 │

│ … ┆ … ┆ … ┆ … │

│ October ┆ Employee02 ┆ 48 ┆ 2 │

│ October ┆ Employee03 ┆ 44 ┆ 1 │

│ August ┆ Employee01 ┆ 83 ┆ 2 │

│ August ┆ Employee02 ┆ 89 ┆ 3 │

│ August ┆ Employee03 ┆ 51 ┆ 1 │

└───────────┴─────────────┴─────────────┴──────┘

step3

#![allow(unused)] fn main() { .filter(col("rank").gt_eq(2)) }

Output:

shape: (8, 4)

┌───────────┬─────────────┬─────────────┬──────┐

│ Date ┆ Employee ID ┆ Performance ┆ rank │

│ --- ┆ --- ┆ --- ┆ --- │

│ str ┆ str ┆ i32 ┆ u32 │

╞═══════════╪═════════════╪═════════════╪══════╡

│ November ┆ Employee02 ┆ 79 ┆ 2 │

│ November ┆ Employee03 ┆ 90 ┆ 3 │

│ August ┆ Employee01 ┆ 83 ┆ 2 │

│ August ┆ Employee02 ┆ 89 ┆ 3 │

│ October ┆ Employee01 ┆ 86 ┆ 3 │

│ October ┆ Employee02 ┆ 48 ┆ 2 │

│ September ┆ Employee02 ┆ 59 ┆ 2 │

│ September ┆ Employee03 ┆ 71 ┆ 3 │

└───────────┴─────────────┴─────────────┴──────┘

Complex Aggregation and Custom Functions

- Simple Aggregation: Takes a single Series as input and outputs a Series with only one element.

- Complex Aggregation: Takes multiple Series as input and outputs multiple rows and columns.

Complex aggregation requires custom functions to compute the desired results.

DataFrame Complex Aggregation

DataFrame.group_by(["date"])?.apply(F) can be used to perform complex aggregation.

F is a custom function that must satisfy |x: DataFrame| -> Result<DataFrame, PolarsError>. The grouped data is encapsulated into a DataFrame, allowing access to all fields. The function returns a DataFrame, and each group can return multiple rows and columns, but the order, names, and types of fields in the returned DataFrame must be consistent across different groups. You need to maintain the group field in the returned DataFrame.

#![allow(unused)] fn main() { let mut employee_df: DataFrame = df!( "Name" => ["Lao Li", "Lao Li", "Lao Li", "Lao Li", "Lao Zhang", "Lao Zhang", "Lao Zhang", "Lao Zhang", "Lao Wang", "Lao Wang", "Lao Wang", "Lao Wang"], "employee_ID" => ["Employee01", "Employee01", "Employee01", "Employee01", "Employee02", "Employee02", "Employee02", "Employee02", "Employee03", "Employee03", "Employee03", "Employee03"], "date" => ["August", "September", "October", "November", "August", "September", "October", "November", "August", "September", "October", "November"], "score" => [83, 24, 86, 74, 89, 59, 48, 79, 51, 71, 44, 90] )?; let f = |x: DataFrame| -> Result<DataFrame, PolarsError> { let col1: &Series = x.column("Name")?; let col2: &Series = x.column("employee_ID")?; let col3: &Series = x.column("score")?; let group_id = x.column("date")?.str()?.get(0).unwrap(); // do something; We get those results below; let group_field = Series::new("group".into(), vec![group_id, group_id, group_id]); let res_field1 = Series::new("field1".into(), vec!["a1,1", "a2,1", "a3,1"]); let res_field2 = Series::new("field2".into(), vec!["a1,2", "a2,2", "a3,2"]); let res_field3 = Series::new("field3".into(), vec!["a1,3", "a2,3", "a3,3"]); let result = DataFrame::new(vec![group_field, res_field1, res_field2, res_field3])?; return Ok(result); }; let res = employee_df.group_by(["date"])?.apply(f)?; // The aggregation returns 3 rows and 3 columns. For different groups, the schema must be consistent (field order, field count, and type). println!("{}", res); }

Output

shape: (12, 4)

┌───────────┬────────┬────────┬────────┐

│ group ┆ field1 ┆ field2 ┆ field3 │

│ --- ┆ --- ┆ --- ┆ --- │

│ str ┆ str ┆ str ┆ str │

╞═══════════╪════════╪════════╪════════╡

│ August ┆ a1,1 ┆ a1,2 ┆ a1,3 │

│ August ┆ a2,1 ┆ a2,2 ┆ a2,3 │

│ August ┆ a3,1 ┆ a3,2 ┆ a3,3 │

│ November ┆ a1,1 ┆ a1,2 ┆ a1,3 │

│ November ┆ a2,1 ┆ a2,2 ┆ a2,3 │

│ … ┆ … ┆ … ┆ … │

│ September ┆ a2,1 ┆ a2,2 ┆ a2,3 │

│ September ┆ a3,1 ┆ a3,2 ┆ a3,3 │

│ October ┆ a1,1 ┆ a1,2 ┆ a1,3 │

│ October ┆ a2,1 ┆ a2,2 ┆ a2,3 │

│ October ┆ a3,1 ┆ a3,2 ┆ a3,3 │

└───────────┴────────┴────────┴────────┘

Join Operations

What is a join?

In data processing, the following requirement often arises: one table stores a large number of user IDs along with user names, ages, phone numbers, shopping addresses, and other user information, while another table stores user IDs along with items to be shipped, item prices, and other transaction information. How can we integrate the data from these two tables based on the ID?

The join operation is used to solve this problem. It combines two dataframes based on specified fields. A join requires two dataframes as input, referred to as the left table and the right table. Additionally, it is necessary to specify which fields are used as the basis for matching, known as "keys." All fields other than the keys are used as values, referred to as left table value fields and right table value fields, respectively. The result of a join operation includes: keys, left table value fields, and right table value fields.

There are four scenarios that can occur with a join:

- A key appears in the left table but not in the right table; the corresponding right table value fields for this key are considered null.

- A key appears in the right table but not in the left table; the corresponding left table value fields for this key are considered null.

- A key appears in both tables; the key is unique in at least one of the tables.

- A key appears in both tables; the key is not unique in either table. This results in a many-to-many join, which is often meaningless and usually indicates a data error that needs careful investigation. A key can be composed of multiple columns or a single column.

The main types of join operations are divided into five categories:

- Left Join (left_join): The result retains all keys from the left table, along with their corresponding left table value fields and right table value fields. Values corresponding to keys not appearing in the right table are considered null.

- Right Join (right_join): The result retains all keys from the right table, along with their corresponding left table value fields and right table value fields. Values corresponding to keys not appearing in the left table are considered null.

- Inner Join (inner_join): The result retains keys common to both the left and right tables, along with their corresponding left table value fields and right table value fields.

- Full Join (full_join): The result retains all keys from both the left and right tables, along with their corresponding left table value fields and right table value fields. Non-existent values are considered null.

- Cross Join (cross_join): A "Cartesian product" refers to all possible combinations of two datasets. For example, if the left table value fields for a certain key contain two rows, A and B, and the right table value fields contain two rows, 1 and 2, then the Cartesian join for this key will produce four rows: (A, 1), (A, 2), (B, 1), (B, 2).

Left join and right join are essentially the same, just swapping the left and right tables. Therefore, Polars only implements left_join. Dataframes and lazyframes have different syntax.

Join API

#![allow(unused)] fn main() { left_df.left_join(right_df, left_on, right_on) left_df.inner_join(right_df, left_on, right_on) left_df.full_join(right_df, left_on, right_on) left_df.cross_join(right_df, left_on, right_on) // Usage: left.full_join(right, ["join_column_left"], ["join_column_right"]) }

Exp:

The employee_score table stores employee performance data, while the employee_info table stores employee identity information. We will merge the two DataFrame using the employee_ID field as the key.

#![allow(unused)] fn main() { let mut employee_score: DataFrame = df!( "employee_ID" => ["Employee01", "Employee01", "Employee01", "Employee01", "Employee02", "Employee02", "Employee02", "Employee02", "Employee03", "Employee03", "Employee03", "Employee03"], "date" => ["August", "September", "October", "November", "August", "September", "October", "November", "August", "September", "October", "November"], "score" => [83, 24, 86, 74, 89, 59, 48, 79, 51, 71, 44, 90] )?; let mut employee_info = df!{ "Name" => ["Lao Li", "Lao Zhang", "Lao Wang"], "employee_ID" => ["Employee01", "Employee02", "Employee03"], "email" => ["zdl361@126.com","LaoZhang@gmail.com","LaoWang@hotmail.com"] }?; let res = employee_score.left_join(&employee_info, ["employee_ID"], ["employee_ID"])?; println!("employee_score:{}\nemployee_info:{}\nafter join:{}",employee_score,employee_info,res); }

Output

employee_score:shape: (12, 3)

┌─────────────┬───────────┬───────┐

│ employee_ID ┆ date ┆ score │

│ --- ┆ --- ┆ --- │

│ str ┆ str ┆ i32 │

╞═════════════╪═══════════╪═══════╡

│ Employee01 ┆ August ┆ 83 │

│ Employee01 ┆ September ┆ 24 │

│ Employee01 ┆ October ┆ 86 │

│ Employee01 ┆ November ┆ 74 │

│ Employee02 ┆ August ┆ 89 │

│ … ┆ … ┆ … │

│ Employee02 ┆ November ┆ 79 │

│ Employee03 ┆ August ┆ 51 │

│ Employee03 ┆ September ┆ 71 │

│ Employee03 ┆ October ┆ 44 │

│ Employee03 ┆ November ┆ 90 │

└─────────────┴───────────┴───────┘

employee_info:shape: (3, 3)

┌───────────┬─────────────┬─────────────────────┐

│ Name ┆ employee_ID ┆ email │

│ --- ┆ --- ┆ --- │

│ str ┆ str ┆ str │

╞═══════════╪═════════════╪═════════════════════╡

│ Lao Li ┆ Employee01 ┆ zdl361@126.com │

│ Lao Zhang ┆ Employee02 ┆ LaoZhang@gmail.com │

│ Lao Wang ┆ Employee03 ┆ LaoWang@hotmail.com │

└───────────┴─────────────┴─────────────────────┘

after join:shape: (12, 5)

┌─────────────┬───────────┬───────┬───────────┬─────────────────────┐

│ employee_ID ┆ date ┆ score ┆ Name ┆ email │

│ --- ┆ --- ┆ --- ┆ --- ┆ --- │

│ str ┆ str ┆ i32 ┆ str ┆ str │

╞═════════════╪═══════════╪═══════╪═══════════╪═════════════════════╡

│ Employee01 ┆ August ┆ 83 ┆ Lao Li ┆ zdl361@126.com │

│ Employee01 ┆ September ┆ 24 ┆ Lao Li ┆ zdl361@126.com │

│ Employee01 ┆ October ┆ 86 ┆ Lao Li ┆ zdl361@126.com │

│ Employee01 ┆ November ┆ 74 ┆ Lao Li ┆ zdl361@126.com │

│ Employee02 ┆ August ┆ 89 ┆ Lao Zhang ┆ LaoZhang@gmail.com │

│ … ┆ … ┆ … ┆ … ┆ … │

│ Employee02 ┆ November ┆ 79 ┆ Lao Zhang ┆ LaoZhang@gmail.com │

│ Employee03 ┆ August ┆ 51 ┆ Lao Wang ┆ LaoWang@hotmail.com │

│ Employee03 ┆ September ┆ 71 ┆ Lao Wang ┆ LaoWang@hotmail.com │

│ Employee03 ┆ October ┆ 44 ┆ Lao Wang ┆ LaoWang@hotmail.com │

│ Employee03 ┆ November ┆ 90 ┆ Lao Wang ┆ LaoWang@hotmail.com │

└─────────────┴───────────┴───────┴───────────┴─────────────────────┘

Data Pivots

Data pivots transform data from a long format to a wide format and apply aggregation functions.

#![allow(unused)] fn main() { // Add "pivot" to the polars features in cargo.toml. let mut employee_df: DataFrame = df!( "Name" => ["Lao Li", "Lao Li", "Lao Li", "Lao Li", "Lao Zhang", "Lao Zhang", "Lao Zhang", "Lao Zhang", "Lao Wang", "Lao Wang", "Lao Wang", "Lao Wang"], "employee_ID" => ["Employee01", "Employee01", "Employee01", "Employee01", "Employee02", "Employee02", "Employee02", "Employee02", "Employee03", "Employee03", "Employee03", "Employee03"], "date" => ["August", "September", "October", "November", "August", "September", "October", "November", "August", "September", "October", "November"], "score" => [83, 24, 86, 74, 89, 59, 48, 79, 51, 71, 44, 90] )?; use polars_lazy::frame::pivot::pivot; let out = pivot(&employee_df, ["date"], Some(["Name", "employee_ID"]), Some(["score"]), false, None, None)?; println!("long format:\n{}\nwide format:\n{}", employee_df, out); }

Output

long format:

shape: (12, 4)

┌───────────┬─────────────┬───────────┬───────┐

│ Name ┆ employee_ID ┆ date ┆ score │

│ --- ┆ --- ┆ --- ┆ --- │

│ str ┆ str ┆ str ┆ i32 │

╞═══════════╪═════════════╪═══════════╪═══════╡

│ Lao Li ┆ Employee01 ┆ August ┆ 83 │

│ Lao Li ┆ Employee01 ┆ September ┆ 24 │

│ Lao Li ┆ Employee01 ┆ October ┆ 86 │

│ Lao Li ┆ Employee01 ┆ November ┆ 74 │

│ Lao Zhang ┆ Employee02 ┆ August ┆ 89 │

│ … ┆ … ┆ … ┆ … │

│ Lao Zhang ┆ Employee02 ┆ November ┆ 79 │

│ Lao Wang ┆ Employee03 ┆ August ┆ 51 │

│ Lao Wang ┆ Employee03 ┆ September ┆ 71 │

│ Lao Wang ┆ Employee03 ┆ October ┆ 44 │

│ Lao Wang ┆ Employee03 ┆ November ┆ 90 │

└───────────┴─────────────┴───────────┴───────┘

wide format:

shape: (3, 6)

┌───────────┬─────────────┬────────┬───────────┬─────────┬──────────┐

│ Name ┆ employee_ID ┆ August ┆ September ┆ October ┆ November │

│ --- ┆ --- ┆ --- ┆ --- ┆ --- ┆ --- │

│ str ┆ str ┆ i32 ┆ i32 ┆ i32 ┆ i32 │

╞═══════════╪═════════════╪════════╪═══════════╪═════════╪══════════╡

│ Lao Li ┆ Employee01 ┆ 83 ┆ 24 ┆ 86 ┆ 74 │

│ Lao Zhang ┆ Employee02 ┆ 89 ┆ 59 ┆ 48 ┆ 79 │

│ Lao Wang ┆ Employee03 ┆ 51 ┆ 71 ┆ 44 ┆ 90 │

└───────────┴─────────────┴────────┴───────────┴─────────┴──────────┘

DataFrame API

| API | Description |

|---|---|

| df.get_column_index(name: &str) -> Option<usize> | Returns the index of the Series corresponding to the name |

| df.column(name: &str) -> Result<&Series, PolarsError> | Returns a reference to the Series based on the column name |

| df.select_at_idx(idx: usize) -> Option<&Series> | Returns a reference to the Series based on the index |

| df.select_by_range(range: R) -> Result<DataFrame, PolarsError> | Returns a new DataFrame based on the Range |

| df.columns(&self, names: I) -> Result<Vec<&Series>, PolarsError> | let sv: Vec<&Series> = df.columns(["Category", "Salary"])?; Returns Vec<&Series> |

| df.select(&self, selection: I) -> Result<DataFrame, PolarsError> | df.select(["Category", "Salary"]) returns a new DataFrame |

| df.get_columns() -> &[Series] | Returns a slice of all columns |

| df.take_columns() -> Vec<Series> | Takes ownership of all columns |

| df.get_column_names() -> Vec<&PlSmallStr> | References to all column names |

| df.get_column_names_owned(&self) -> Vec<PlSmallStr> | Clones and returns all column names, with ownership |

| df.set_column_names(names: I) | Sets column names |

| df.dtypes() -> Vec<DataType> | Returns the type of each field |

| df.filter(mask: &ChunkedArray<BooleanType>) -> Result<DataFrame, PolarsError> | Each element of the mask represents a row, filtering out rows where mask == true |

| df.take(indices: &ChunkedArray<UInt32Type>) -> Result<DataFrame, PolarsError> | Returns rows with specified indices. let idx = IdxCa::new("idx".into(), [0, 1, 9]); df.take(&idx) |

| df.slice(offset: i64, length: usize) -> DataFrame | Returns rows specified by the slice |

| df.rename(oldname: &str, newname: PlSmallStr) -> Result<&mut DataFrame, PolarsError> | Renames a field, df.rename("oldname", "newname".into()) |

| df.sort_in_place(by: impl IntoVec<PlSmallStr>, sort_options) -> Result<&mut DataFrame, PolarsError> | Sorts df in place, df retains the sorted result, df.sort(["col1", "col2"], Default::default()) |

| df.sort(by: impl IntoVec<PlSmallStr>, sort_options) -> Result<DataFrame, PolarsError> | Sorts df, returns a new sorted DataFrame, original variable remains unchanged |

| df.replace(column: &str, new_col: S) -> Result<&mut DataFrame, PolarsError> | Replaces the specified column with a new Series. The name of the new_col will be assigned to the specified name |

| df.with_column(new_col: IntoSeries) | Adds a column to df, if new_col's name already exists, it overwrites the old value |

| df.insert_column(index: usize, column: S) | Inserts a column at the specified index |

| df.hstack_mut(columns: &[Series]) | Adds multiple columns to df, modifying df |

| df.hstack(columns: &[Series]) | Adds multiple columns to df, returns a new DataFrame |

| df.get(idx) -> Option<Vec<AnyValue<'_>>> | Returns the specified row. Inefficient |

| df.with_row_index_mut(name: PlSmallStr, offset: Option | Adds an index column at the specified offset index, with column name name |

| df.schema() -> Schema<DataType> | Gets the structure of the DataFrame, including field names and field types |

| df.fields() -> Vec<Field> | Returns field information |

| df.estimated_size() | Gets heap memory usage in bytes |

| df.explode(columns: I) -> Result<DataFrame, PolarsError> | Unpacks a list Series to lines. See Splitting Strings into Multiple Lines, Unpack values wrapped in a list to lines |

| df.unnest(cols) | Unpack struct into multiple columns. See Splitting into Multiple Columns |

| df.group_by(["col1", "col2"…]) | Groups by specified columns |

| df.iter() | Creates an iterator by columns |

| df.shape() | Returns (height, width) |

| df.height() | Returns the height |

| df.width() | Returns the width |

| df.clear() | Clears the DataFrame |

| df.is_empty() | Checks if the DataFrame is empty |

| df.vstack(&self, other: &DataFrame) | Concatenates corresponding fields of two DataFrames, returning a new DataFrame. The field order, type, and column names of other and df must match exactly. It is recommended to call DataFrame::align_chunks after completing the vstack operation. |

| df.vstack_mut() | Same as vstack, but vstack_mut modifies df itself instead of returning a new DataFrame |

| df.pop() | Pops the last field and returns the popped Series |

| df.drop_in_place(name: &str) | Pops the specified field and returns the popped Series |

| df.drop(name: &str) | Returns a new DataFrame with the specified field removed |

| df.drop_many(names: I) | Deletes multiple fields, returning a new DataFrame with the specified fields removed |

| df.split_at(offset: i64) -> (DataFrame, DataFrame) | Splits at the specified row index |

| df.head(length: Option<usize>) | Returns a new DataFrame containing the first length rows of df |

| df.tail(length: Option<usize>) | Returns a new DataFrame containing the last length rows of df |

| df.unique | Removes duplicate rows, cannot retain original order |

| df.unique_stable | Removes duplicate rows, retains original order |

| let mut df2 = df1.unique_stable(Some(&["Element".into(), "id".into()]), UniqueKeepStrategy::First, None)?; | |

| df.unique(None, UniqueKeepStrategy::First, None)? | |

| df.is_unique | Checks if rows are unique |

| df.is_duplicated | Checks for duplicated rows |

| df.null_count() | Returns a new DataFrame where each field contains the null value count of the corresponding field in df |

Row Iteration

Recommended Method for Row Iteration

In data programming, you often know the field types explicitly. In such cases, you should use the downcasting functions like i8(), i16(), i32(), i64(), f32(), f64(), u8(), u16(), u32(), u64(), bool(), str(), binary(), decimal(), list() to extract references to the underlying ChunkedArray<T> of a Series. The type parsing operation only performs a type conversion on the underlying ChunkedArray<T> reference and does not copy the data. You need to ensure that the parsing function you call matches the underlying type T; otherwise, a runtime error will occur. See: Iterating over Series.

After extracting the element iterators for each field, use itertools::multizip to bind multiple iterators into one and then iterate over them. Here is the code to generate Person type values by iterating over DataFrame rows.

#![allow(unused)] fn main() { use polars::prelude::*; use itertools::multizip; #[derive(Debug)] pub struct Person { id: u32, name: String, age: u32, } let df = df!( "id" => &[1u32, 2, 3], "name" => &["John", "Jane", "Bobby"], "age" => &[32u32, 28, 45] ) .unwrap(); // take_columns() will take ownership of df, which is not necessary; df.columns can return references to specified fields. let objects = df.take_columns(); // Downcast fields and generate iterators let id_ = objects[0].u32()?.iter(); let name_ = objects[1].str()?.iter(); let age_ = objects[2].u32()?.iter(); // Use multizip to iterate over multiple iterators simultaneously let combined = multizip((id_, name_, age_)); let res: Vec<_> = combined.map( |(a, b, c)| { Person { id: a.unwrap(), name: b.unwrap().to_owned(), age: c.unwrap(), } }).collect(); print!("{:?}", res); }

Not Recommended Method for Row Iteration, AnyValue

In the Rust Polars library, Series is a dynamically typed data structure that can contain any type of data. When you use the df.get_row() method to get a row, the data is encapsulated into AnyValue one by one. Polars needs to determine the actual type of this element at runtime. AnyValue is an enumeration, and you need to use pattern matching to extract the value. This process requires some additional computation, so if you use the df.get_row() method in a loop, these extra computations can accumulate and significantly degrade the performance of your code.

Here is the code to generate Person type values by iterating over DataFrame rows using df.get_row.

#![allow(unused)] fn main() { use polars::prelude::*; #[derive(Debug)] pub struct Person { id: u32, name: String, age: u32, } let df = df!( "id" => &[1u32, 2, 3], "name" => &["John", "Jane", "Bobby"], "age" => &[32u32, 28, 45] ).unwrap(); let personlist_iter = (0..df.height()).into_iter().map( |x: usize| { let mut row_ = df.get_row(x).unwrap(); let mut row_iter = row_.0.into_iter(); // Extract the corresponding values using pattern matching if let (AnyValue::UInt32(id_), AnyValue::String(name_), AnyValue::UInt32(age_)) = (row_iter.next().unwrap(), row_iter.next().unwrap(), row_iter.next().unwrap()) { return Person { id: id_, name: name_.to_string(), age: age_, }; } else { panic!("bad value in df!"); } }); let person_list: Vec<Person> = personlist_iter.collect::<Vec<_>>(); println!("{:?}", person_list); }

LazyFrame

Similar to a DataFrame, a LazyFrame represents an abstraction of a DataFrame that is yet to be realized. A LazyFrame records the data source, data operations, and the data flow (whether it will generate a DataFrame or be written to disk via sink_csv). LazyFrames are parallel and operate in a streaming manner, which is particularly useful for handling large datasets that may not fit entirely in memory. LazyFrames can significantly reduce memory residency.

Constructing Lazyframe

A Lazyframe can be constructed by loading from a file using the APIs introduced in the IO Chapter. Alternatively, you can generate a corresponding Lazyframe by calling the lazy() method on a DataFrame.

#![allow(unused)] fn main() { let lf = df.lazy(); }

Indexing LazyFrame

Indexing in LazyFrame requires using expression syntax.

Column Indexing

In the select context, you can use the col() expression to select certain columns. cols(["date", "logged_at"]) selects specified names. col("*") or all() selects all columns. exclude(["logged_at", "index"]) excludes specified columns. * can be used as a wildcard.

#![allow(unused)] fn main() { // Single column selection let out = types_df.clone().lazy().select([col("place")]).collect()?; // Wildcard selection, selects all columns starting with 'a' let out = types_df.clone().lazy().select([col("a*")]).collect()?; // Regular expression let out = types_df.clone().lazy().select([col("^.*(as|sa).*$")]).collect()?; // Multiple column names selection let out = types_df.clone().lazy().select([cols(["date", "logged_at"])]).collect()?; // Data type selection, selects all columns that match the data types. let out = types_df.clone().lazy() .select([dtype_cols([DataType::Int64, DataType::UInt32, DataType::Boolean]).n_unique()]) .collect()?; // Alias to rename fields let df_alias = df.clone().lazy() .select([ (col("nrs") + lit(5)).alias("nrs + 5"), (col("nrs") - lit(5)).alias("nrs - 5")]) .collect()?; }

Row Indexing

Using LazyFrame Methods

#![allow(unused)] fn main() { let res: LazyFrame = types_df.clone().lazy().filter(col("id").gt_eq(lit(4))); // The filter parameter is an expression that returns a boolean array, rows with false values will be discarded. println!("{}", res.collect()?); let res: LazyFrame = types_df.clone().lazy().slice(1, 2); // Returns rows specified by the slice, a negative offset means counting from the end. .slice(-5,3) means selecting 3 elements starting from the 5th last element. }

Using Expressions for Row Indexing in Context

#![allow(unused)] fn main() { let res: LazyFrame = employee_df.clone().lazy().select( col("*").filter( col("value").gt_eq(lit(4)) ) ); let res: LazyFrame = employee_df.clone().lazy().select(col("*").slice(2,3)); }

In these examples, you can see how to use expressions to filter and slice rows and columns in a LazyFrame, providing flexible ways to manipulate data.

Expressions

Polars has a powerful concept called expressions (of type Expr). Polars expressions can be used in various contexts and essentially perform the function Fn(Series) -> Series. An Expr takes a Series as input and outputs a Series, allowing for chained calls.

#![allow(unused)] fn main() { col("foo").sort().head(2) }

The above snippet selects the column "foo", sorts it, and then takes the first two values from the sorted output. The power of expressions lies in the fact that each expression generates a new expression, and they can be chained, stored in variables, or passed as parameters. You can run expressions through Polars' execution context. Here, we run two expressions in the select context:

#![allow(unused)] fn main() { df.lazy() .select([ col("foo").sort(Default::default()).head(None), col("bar").filter(col("foo").eq(lit(1))).sum(), ]) .collect()?; }

Each independent Polars expression can run independently without needing the results of other expressions or interacting with them. Therefore, Polars may assign expressions to different threads or processors for simultaneous execution. Polars expressions are highly parallel. Understanding Polars expressions is a key step in learning Polars.

Contexts

Functions that can accept expressions are called contexts, and they include the following three types:

| Meaning | Code |

|---|---|

| Selection | df.select([..]) |

| Group Aggregation | df.groupby(..).agg([..]) |

| Horizontal Stacking (hstack) or Adding Columns | df.with_columns([..]) |

Expressions

Polars has a powerful concept called expressions (Expr type). Polars expressions can be used in various contexts and essentially perform the function Fn(Series) -> Series. An Expr takes a Series as input and outputs a Series. Therefore, Expr can be chained together.

#![allow(unused)] fn main() { col("foo").sort().head(2) }

The above snippet represents selecting the column "foo", sorting it, and then taking the first two values of the sorted output. The power of expressions lies in the fact that each expression produces a new expression, and they can be chained, stored in variables, or passed as parameters. You can run expressions through Polars' execution context. Here, we run two expressions in the select context:

#![allow(unused)] fn main() { df.lazy() .select([ col("foo").sort(Default::default()).head(None), col("bar").filter(col("foo").eq(lit(1))).sum(), ]) .collect()?; }

Each independent Polars expression can run independently without needing the results of any other expression or interacting with other expressions. Therefore, Polars may assign expressions to different threads or processors to execute simultaneously. Polars expressions are highly parallel. Understanding Polars expressions is a key step in learning Polars.

Contexts

Functions that can accept expressions are called contexts, and they include the following three types:

| Meaning | Code |

|---|---|

| Select | df.select([..]) |

| Group aggregation | df.groupby(..).agg([..]) |

| Horizontal stacking (hstack) or adding columns | df.with_columns([..]) |

Basic Operations

Expressions support basic operations such as +, -, *, /, <, and >.

#![allow(unused)] fn main() { let df_numerical = df .clone() .lazy() .select([ (col("nrs") + lit(5)).alias("nrs + 5"), (col("nrs") - lit(5)).alias("nrs - 5"), (col("nrs") * col("random")).alias("nrs * random"), (col("nrs") / col("random")).alias("nrs / random"), ]) .collect()?; println!("{}", &df_numerical); }

For logical comparisons, trigonometric functions, aggregations, and other operations, see Expression Methods.

Column Selection

In the context of select, you can use the col() expression to select certain columns.

col("*") or all() indicates selecting all columns. exclude(["logged_at", "index"]) indicates excluding specified columns. * can be used as a wildcard.

cols(["date", "logged_at"]) selects specified column names.

#![allow(unused)] fn main() { // Single column selection let out = df.clone().lazy().select([col("place")]).collect()?; // Wildcard selection, selecting all columns with names starting with 'a' let out = df.clone().lazy().select([col("a*")]).collect()?; // Regular expression let out = df.clone().lazy().select([col("^.*(as|sa).*$")]).collect()?; // Multiple column names selection let out = df.clone().lazy().select([cols(["date", "logged_at"])]).collect()?; // Data type selection, selecting all columns that satisfy the data type. let out = df.clone().lazy() .select([dtype_cols([DataType::Int64, DataType::UInt32, DataType::Boolean]).n_unique()]) .collect()?; }

Dataset generation for this section